Working with Which? (aka the Consumers’ Association), we used our new tool FractalScan Surface to survey the online attack surfaces of 25 big UK companies across five different sectors. You can read the Which? article here, which puts the findings into context for consumers; we wanted to provide some more technical details on our approach and the findings.

Background

We’ve worked with Which? before, on spam text messages and reviewing ISP routers, so when Andrew asked if we could help with a new piece of research we were keen to help out. This was not paid work for us; we are happy to partner with Which? on important investigations, and it was a great opportunity to test our tool on some big companies.

Approach

Given we were working with the Consumers’ Association, we wanted to pick a range of companies who provide consumer products and services, and went with banks, airlines, energy utilities, water utilities, and supermarkets. To keep it manageable we went for five companies from each group. We wanted the five biggest companies in each group, and figured that any selection should be broadly representative. We made the choices pretty quickly, so don’t infer anything from omissions or inclusions.

Enumeration

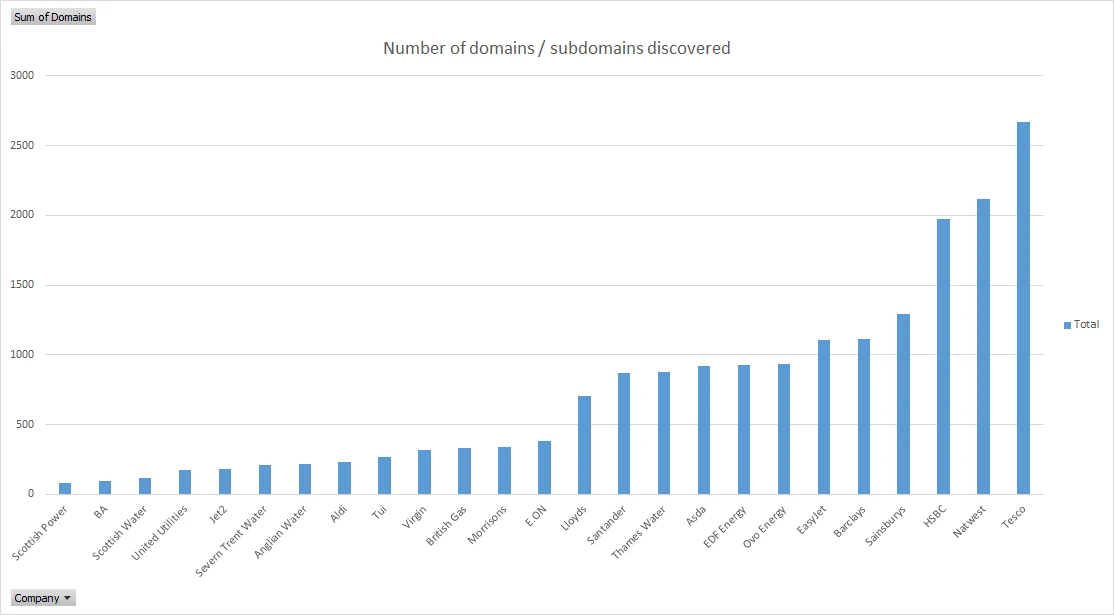

We used our own attack surface tool, FractalScan Surface. It takes domains or IP addresses as initial seeds. In this case we just entered the main UK domain for each company, and let FractalScan Surface do its thing to find respective subdomains. It does a great job on subdomains; the companies, primary domains, and number of subdomains discovered are all shown in the table below:

These are just subdomains of the main domain, so the companies’ approach to domains will have an effect. For example, if the original domain is supermarket.com then we’ll find banking.supermarket.com, but not supermarketbanking.com. For more in-depth research we’d make the effort to add more high-level domains as seeds, but we didn’t have the time to do that here, and sticking to a single seed for each company is consistent.
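To make the single-seed scoping concrete, here is a minimal sketch of the kind of check that separates banking.supermarket.com from supermarketbanking.com. It is an illustration only, not how FractalScan Surface works internally; the function name `in_scope` and the candidate list are made up for the example.

```python
def in_scope(hostname: str, seed: str) -> bool:
    """Return True if hostname is the seed domain itself or a subdomain of it."""
    host = hostname.lower().rstrip(".")
    seed = seed.lower().rstrip(".")
    # Require a dot boundary so "supermarketbanking.com" does not match
    # the seed "supermarket.com".
    return host == seed or host.endswith("." + seed)


# Hypothetical candidates, using the example names from the text.
candidates = ["banking.supermarket.com", "supermarketbanking.com", "supermarket.com"]
in_scope_hosts = [h for h in candidates if in_scope(h, "supermarket.com")]
print(in_scope_hosts)
```

Matching on the dot boundary rather than a plain suffix is what keeps look-alike domains out of scope unless they are added as seeds of their own.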

For the most part we tried very hard to be consistent in our approach across all of the companies, to the extent that we left out some results we’d found manually because we didn’t want to have to do the same for every company.

Testing

To keep FractalScan Surface compliant with the Computer Misuse Act (CMA) we have to be passive, as we don’t have permission from the included companies to do anything active. This means the FractalScan Surface results are limited to a subset of all possible vulnerabilities and risks that may exist for a target company, specifically those that we can discover from public domain records and public websites. These largely consist of:

- Issues with expired or lapsed domain registration.

- Expired TLS certificates or non-TLS websites.

- Improper email configuration, i.e. problems with SPF, DKIM and DMARC configuration (our favourite topic).

- Out of date components in public websites.

An important point to note is that attackers aren’t necessarily limited by the Computer Misuse Act, so there are likely other issues that we couldn’t find but a malicious attacker could.

We’ve been working very hard lately to reduce the possibility of false-positive results in FractalScan, so we’re confident about the results. Where we could, we did some manual verification of the higher-severity risks.
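As an illustration of the email configuration checks in the list above, here is a rough sketch of how a DMARC TXT record (published at `_dmarc.<domain>`) could be assessed once it has been looked up. The DNS lookup itself is left out, and the function names and finding messages are our own invention for this example rather than FractalScan Surface output.

```python
from typing import Dict, List, Optional


def parse_dmarc(record: str) -> Dict[str, str]:
    """Split a DMARC TXT record into its tag=value pairs."""
    tags: Dict[str, str] = {}
    for part in record.split(";"):
        if "=" in part:
            key, value = part.split("=", 1)
            tags[key.strip().lower()] = value.strip()
    return tags


def dmarc_findings(record: Optional[str]) -> List[str]:
    """Return a list of concerns for one domain's DMARC record (empty if none)."""
    if not record:
        return ["no DMARC record published"]
    tags = parse_dmarc(record)
    if tags.get("v", "").upper() != "DMARC1":
        return ["missing v=DMARC1 version tag"]
    policy = tags.get("p", "").lower()
    if policy not in {"none", "quarantine", "reject"}:
        return [f"missing or unrecognised policy: {policy!r}"]
    if policy == "none":
        return ["policy is p=none (monitoring only, no enforcement)"]
    return []


# example.com addresses are placeholders.
print(dmarc_findings("v=DMARC1; p=none; rua=mailto:dmarc@example.com"))
```

A real check would also look at SPF and DKIM and at subdomain policy; this only covers the top-level DMARC policy tag.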

The results

Overall

So how did they all do? We found potential security and misconfiguration issues for all of them, which is pretty typical for large organisations.

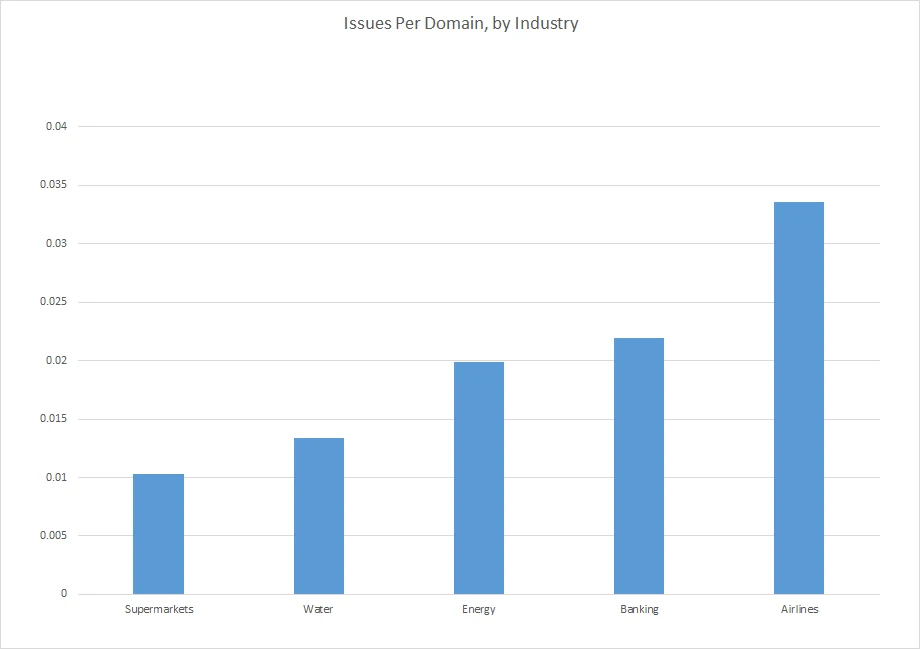

For scoring, we could just count up the total number of issues per company, and score them overall. But that doesn’t take into account the number of assets they have. Here’s a graph of the (critical/high) issues per domain, which is more of a measure of how well they’re doing per asset:

Many people won’t be surprised to see the airlines doing the worst, given their long history of data breaches and IT fiascos. On the positive side, even for them we’re only talking about 1 issue for every 30ish domains.

But there’s a big variable here that needs considering: complexity. Everything else being equal, is it better to have 10 vulnerabilities in 10 assets, or 10 vulnerabilities in 100 assets? You can probably argue either way, but what does seem clear is that it’s harder to manage and maintain 100 assets than it is 10, so maybe the former is better.
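The per-domain normalisation is simple arithmetic, sketched below. The company names and counts are illustrative placeholders, not figures from the survey, and `CompanyResult` is a hypothetical structure for the example.

```python
from dataclasses import dataclass


@dataclass
class CompanyResult:
    name: str
    domains: int         # subdomains discovered for the seed domain
    serious_issues: int  # critical + high findings

    @property
    def issues_per_domain(self) -> float:
        # Guard against a company with no discovered domains.
        return self.serious_issues / self.domains if self.domains else 0.0


# Illustrative numbers only.
results = [
    CompanyResult("Company A", domains=10, serious_issues=10),
    CompanyResult("Company B", domains=100, serious_issues=10),
    CompanyResult("Company C", domains=300, serious_issues=10),
]

# Worst ratio first.
ranked = sorted(results, key=lambda r: r.issues_per_domain, reverse=True)
for r in ranked:
    print(f"{r.name}: {r.issues_per_domain:.3f} issues per domain")
```

All three placeholder companies have the same raw issue count, which is exactly the case where the ratio and the total tell different stories.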

By issue

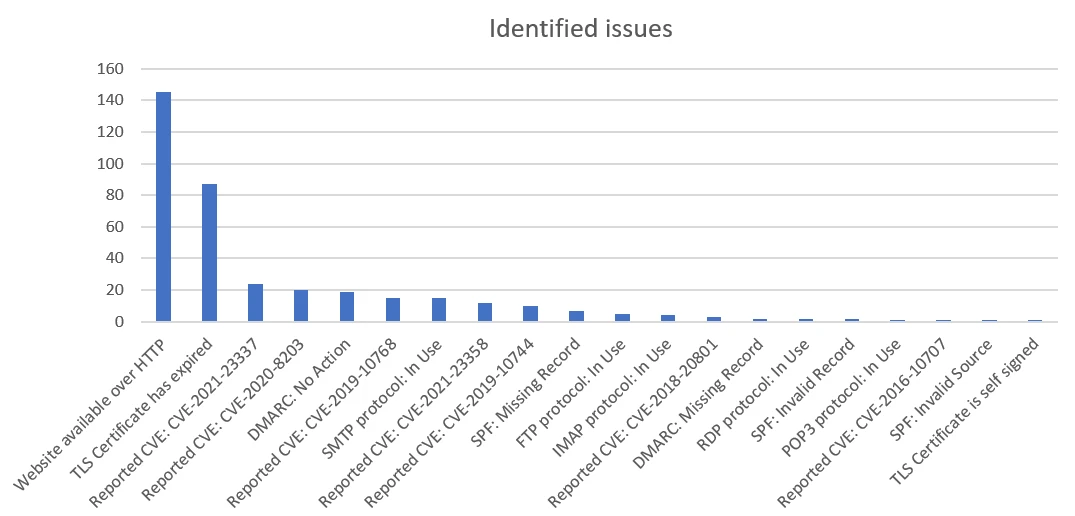

The break down of issues is shown in the graph below:

We broadly have the four categories mentioned above.

The two most common really should be solved problems: websites available over plaintext HTTP, and expired certificates. Oddly, for expired certificates there were a couple of big offenders amongst the banks, which when combined accounted for 45 of the 84 expired certificates found.
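For the expired certificate case, the check boils down to comparing a certificate’s `notAfter` date with the current time. Here is a minimal sketch using Python’s standard `ssl` module; it assumes the `notAfter` string has already been obtained (how it is fetched is left out), and `days_until_expiry` is a name made up for this example.

```python
import ssl
import time
from typing import Optional


def days_until_expiry(not_after: str, now: Optional[float] = None) -> float:
    """Days until a certificate's notAfter date; negative means already expired.

    `not_after` uses the format found in the "notAfter" field returned by
    ssl.SSLSocket.getpeercert(), e.g. "Jan  5 09:34:43 2018 GMT".
    """
    expires = ssl.cert_time_to_seconds(not_after)
    current = time.time() if now is None else now
    return (expires - current) / 86400


remaining = days_until_expiry("Jan  5 09:34:43 2018 GMT")
print("expired" if remaining < 0 else f"{remaining:.0f} days left")
```

The optional `now` argument is only there so the function can be tested against a fixed point in time.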

All of the CVEs relate to out-of-date website components, specifically JavaScript components such as Angular, Lodash, and Underscore.
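One passive way to spot an out-of-date component is to read the version number from a script URL and compare it with a minimum acceptable version. The sketch below shows the idea only: `examplelib`, the URLs, and the threshold are placeholders, and real matching of versions to CVEs needs a vulnerability database that is not shown here.

```python
import re
from typing import Optional, Tuple

VERSION_RE = re.compile(r"(\d+)\.(\d+)\.(\d+)")


def parse_version(text: str) -> Optional[Tuple[int, ...]]:
    """Extract the first major.minor.patch version found in text, if any."""
    match = VERSION_RE.search(text)
    return tuple(int(p) for p in match.groups()) if match else None


def is_outdated(script_url: str, minimum: Tuple[int, int, int]) -> bool:
    """True if the URL carries a version lower than the given minimum."""
    found = parse_version(script_url)
    # Compare as integer tuples so that 1.10.0 sorts after 1.4.0.
    return found is not None and found < minimum


# Placeholder library, URL and threshold.
MINIMUM = (1, 4, 0)
print(is_outdated("https://www.example.com/js/examplelib-1.2.3.min.js", MINIMUM))
```

Comparing integer tuples rather than strings matters here, because a string comparison would wrongly rank "1.10.0" below "1.4.0".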

There are quite a few old plaintext protocols out there. To avoid false positives, where we spotted an open port for POP3/IMAP/SMTP we used openssl to check whether it was offering STARTTLS.
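We did this check with openssl, but the same idea can be sketched for SMTP by looking at what a server advertises in its EHLO reply. This is a different technique from the openssl one and covers SMTP only; the function name and the sample reply are made up for illustration.

```python
def advertises_starttls(ehlo_response: str) -> bool:
    """Return True if an SMTP EHLO reply lists the STARTTLS extension."""
    for line in ehlo_response.splitlines():
        # Reply lines look like "250-STARTTLS" (more to follow)
        # or "250 STARTTLS" (last line).
        if len(line) >= 4 and line[:3] == "250":
            keyword = line[4:].strip().split(" ")[0]
            if keyword.upper() == "STARTTLS":
                return True
    return False


# A made-up EHLO reply from a hypothetical server.
sample = (
    "250-mail.example.com\r\n"
    "250-SIZE 35882577\r\n"
    "250-STARTTLS\r\n"
    "250 8BITMIME"
)
print(advertises_starttls(sample))
```

For a live check, Python’s `smtplib.SMTP` offers `ehlo()` followed by `has_extn("starttls")`, which does the same job against a real server.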

We’ll not say much on the email errors, as that’s the topic of a future blog.

Feedback

As ever with this sort of work, we got some mixed feedback. It’s easy to understand a default defensive reaction when someone points out potential issues with your stuff, especially when it’s arrived unprompted. So some of the companies were initially very defensive, and on a couple of occasions outright dismissive. But in general we had positive engagement, varying from a general commitment to fixing the agreed issues, to them providing detailed feedback on the reported issues.

From the five companies who provided proper numbers in their responses, we could tally 20 decommissioned sites, 3 sites “updated”, 10 “resolved” already and 4 “in progress” (updated and resolved may mean the same thing; they came from different people).

There were some genuine false positives, which we expected, as we can’t conclusively prove some issues without active testing or knowledge of the target systems. We’ve taken a few things on board, and have some ideas in the pipeline to refine how FractalScan Surface works.

In some cases things had changed in between us doing the scan and the companies looking at the data. And in some cases we disagreed with their views on whether certain issues were valid, and vice versa. That is also to be expected, particularly when it comes to the severity of findings. But many companies reported on how they’d fixed issues, updated configurations and shut down old sites. Which was really the whole point of the exercise.

Interesting examples

Here we’ve included a few (somewhat blurred) screenshots of some of the more interesting examples of the issues found.

Visible admin pages

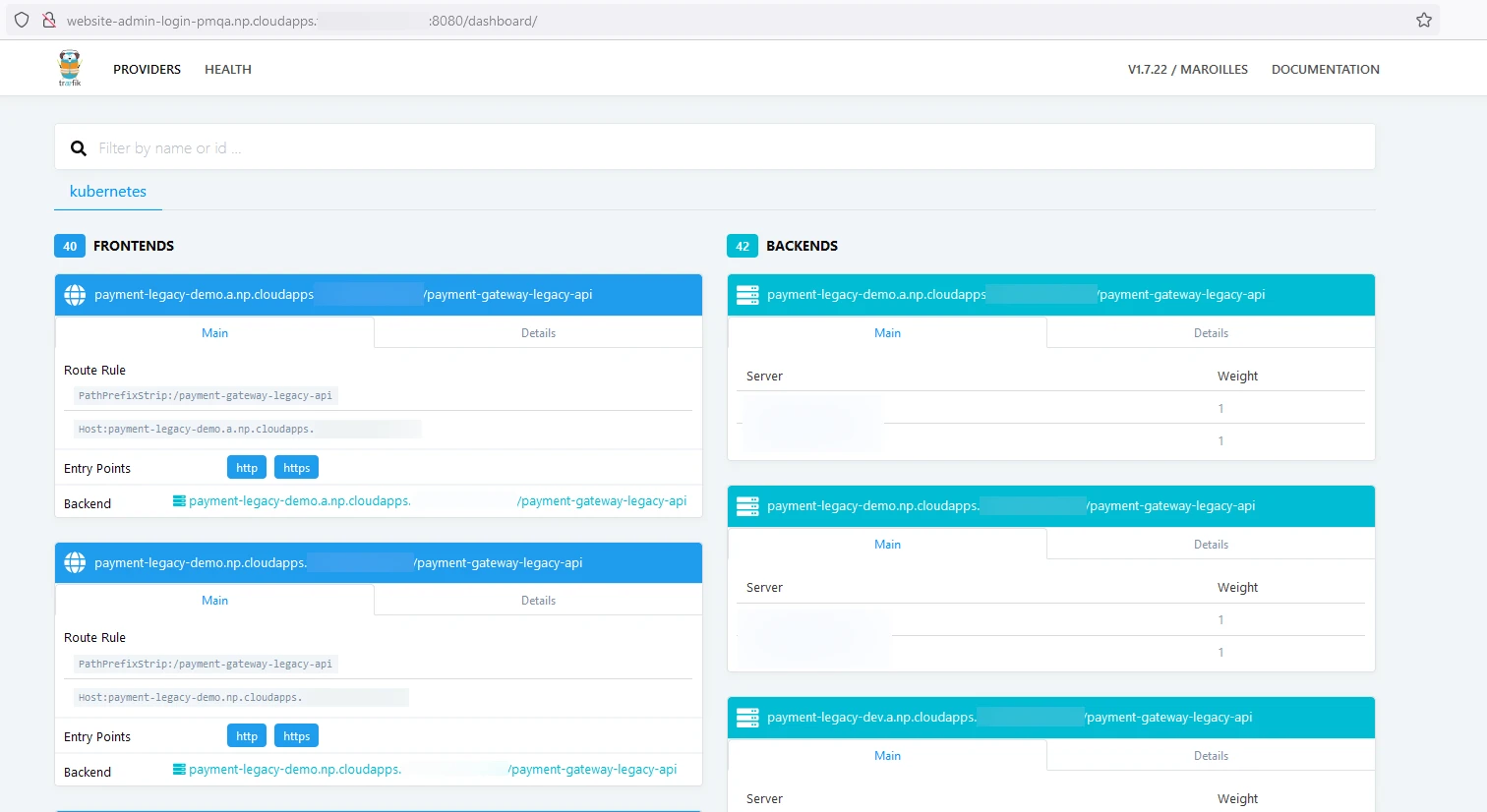

Here’s a classic: an internet-visible management interface for some cloud infrastructure:

It’s unlikely to be a risk in itself, but it’s normally a bad idea to make details of your backend and infrastructure readily available, especially as this seems to be something for a payment API.

Expired TLS certificates and HTTP websites

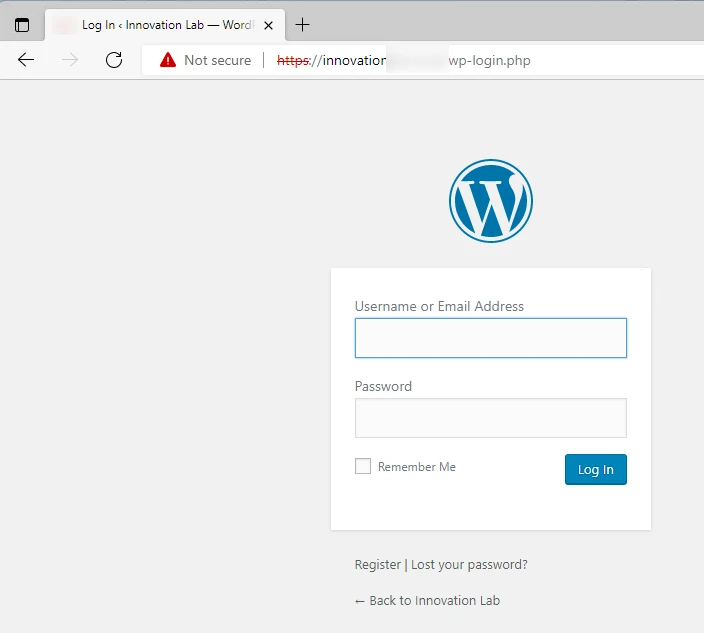

We could have chosen from a whole bunch of these, but as one example here’s a Wordpress admin page available over un-encrypted HTTP:

Logins to this page will happen in plaintext, which is obviously a bad thing.

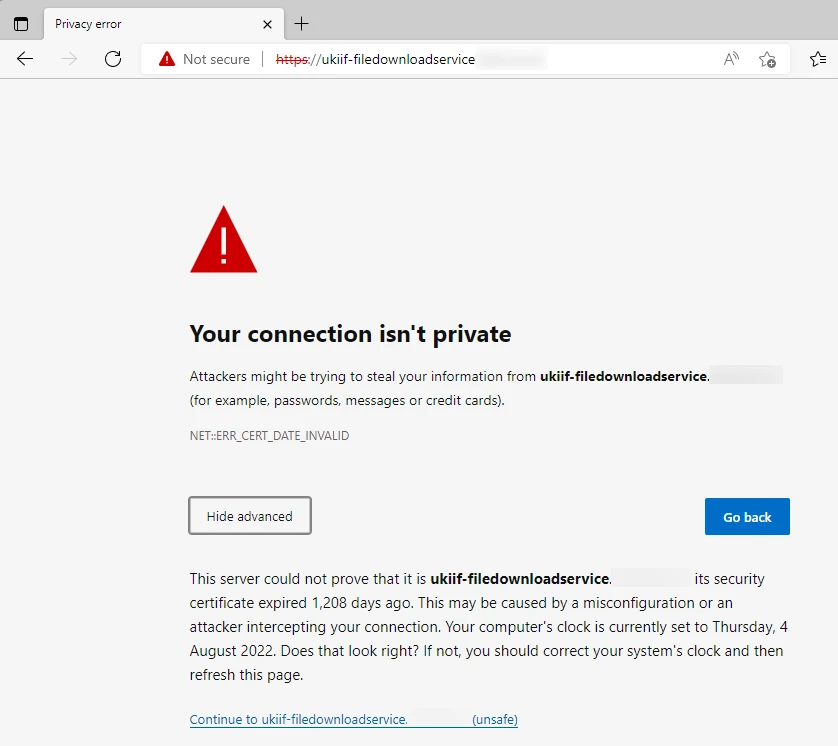

And lots of expired TLS certs:

Often these seemed to be from old domain registrations, so there wasn’t a website there any more. This at least means there’s no chance a customer could use it, but it’s still untidy.

Expired domains

And finally, we weren’t the first to spot some of these issues. Here’s one of three old domains spotted by the tool, which have already been hijacked. This is presumably the work of Jetp1ane aka James Barnett:

Conclusion

So what’s the conclusion here? Are all these big companies completely useless at cyber security? No. But do they all generally have things that can be improved and tidied up? Clearly, yes. Without wanting to end on a needlessly FUD-dy tone, what we can find passively within the CMA is the tip of the iceberg, and attackers can and do go further.

It’s also apparent that many big companies could benefit from an attack surface tool like FractalScan Surface, which is exactly why we built it!